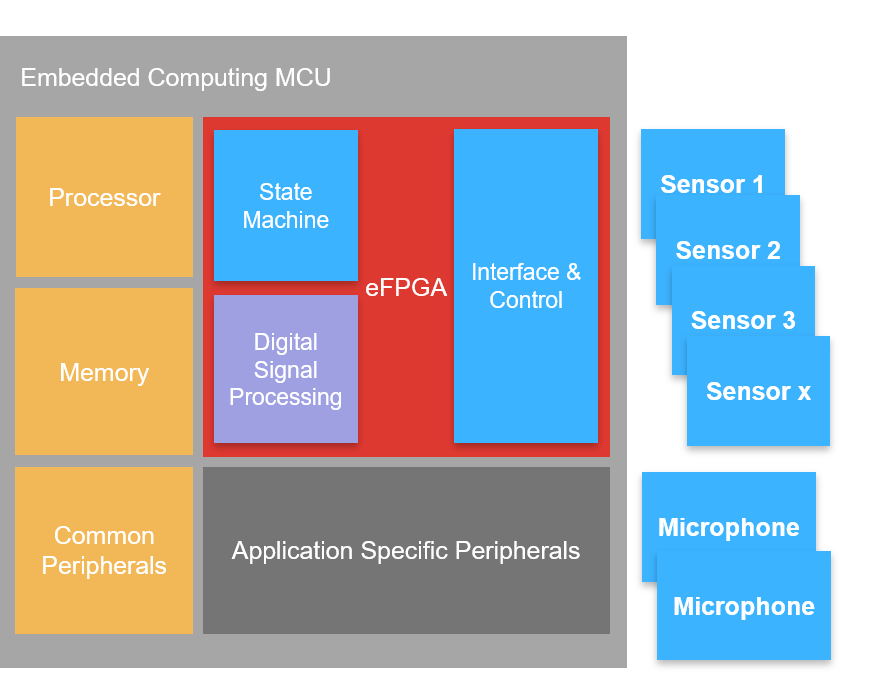

QuickLogic’s latest eFPGA architecture designed from the ground up for MCU/SoC/custom ASIC applications - optimized for edge and endpoint AI processing, military, aerospace and automotive

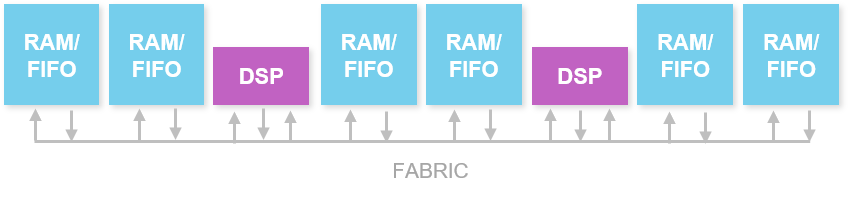

QuickLogic’s newest eFPGA architecture is designed for embedding into low-power SoCs and MCUs. It includes hierarchical logic and routing plus hardware acceleration building blocks such as embedded memory and multiplier-accumulators (MACs).

Features

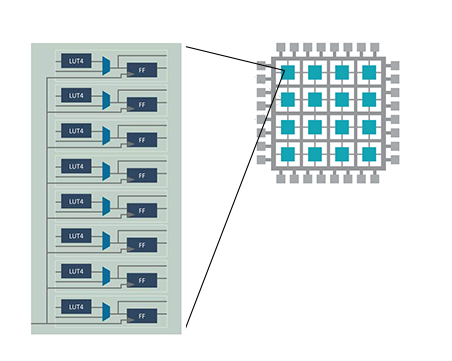

- Homogeneous Fabric architecture

- Super Logic Cell (SLC) consisting of

  - 8 LUT4

  - 8 registers

- Hierarchical routing networks for optimum performance and power consumption

- eFPGA arrays range from 8x8 to 64x64 SLCs

  - ~500 LUT4s to 32K LUT4s

- Built in Block RAM

- Fracturable Multiplier-Accumulators (MACs)
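The LUT4 range quoted above follows from the array dimensions and the eight LUT4s in each SLC. A minimal sketch of that arithmetic, assuming every site in the array is an SLC (the function name `fabric_capacity` is hypothetical and not part of any QuickLogic tool):

```python
# Per-SLC resources, taken from the feature list above.
LUT4_PER_SLC = 8
REGS_PER_SLC = 8


def fabric_capacity(rows: int, cols: int) -> dict:
    """Return SLC, LUT4 and register counts for a rows x cols array.

    Assumption: every array site holds one SLC.
    """
    slcs = rows * cols
    return {
        "slcs": slcs,
        "lut4": slcs * LUT4_PER_SLC,
        "registers": slcs * REGS_PER_SLC,
    }


smallest = fabric_capacity(8, 8)    # 64 SLCs, 512 LUT4s (the "~500" figure)
largest = fabric_capacity(64, 64)   # 4096 SLCs, 32768 LUT4s (the "32K" figure)
print(smallest)
print(largest)
```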

Benefits

- Expand addressable market of your SoC through on-chip reprogrammability

- Market test new features and functions before committing to hard gates or custom silicon

- Protect your ASIC by using the eFPGA as a reprogrammable isolation area for security or authentication IP

- Extend product longevity and enable hardware-based Continuous Integration (CI)

Have questions? Contact us to learn more.

More About QuickLogic FPGA Software

Resources

QuickLogic ArcticPro 3 eFPGA IP and FPGA Software for Samsung Foundry 28FDS (28nm FD-SOI)

eFPGA use cases

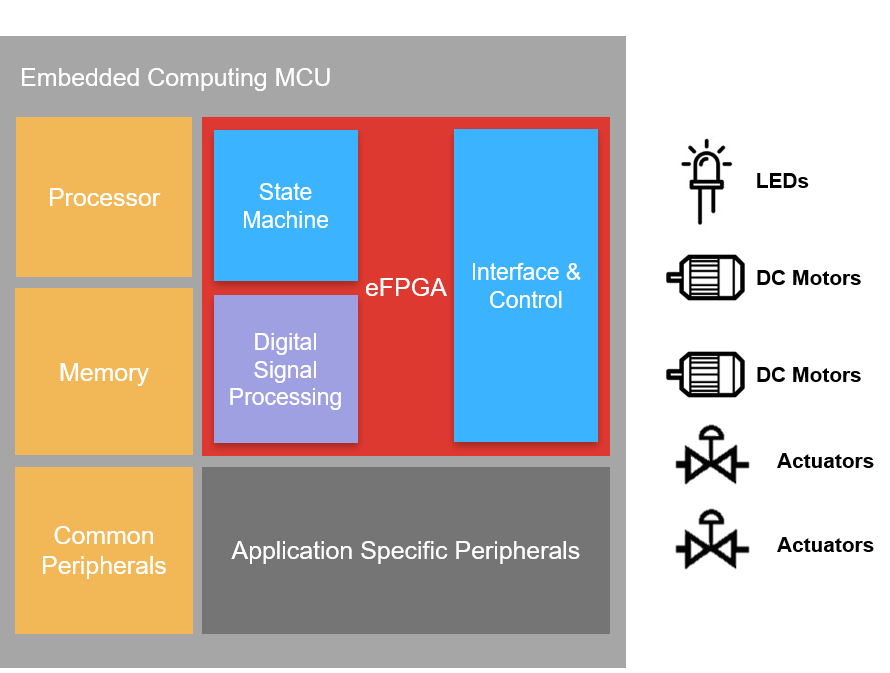

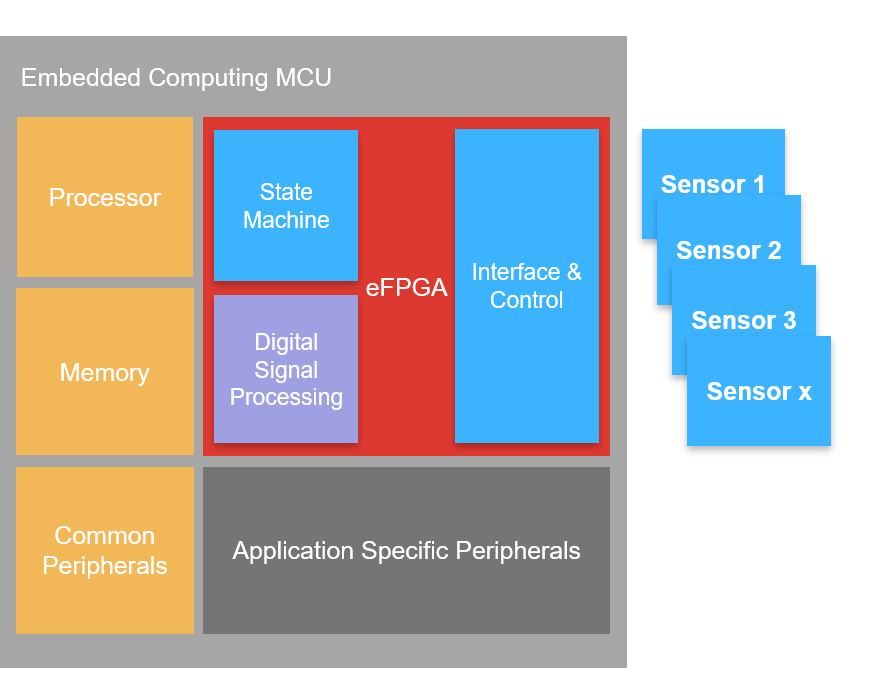

Hardware Offloaded/Accelerated DSP

- DSP functions (e.g. filters) are an integral part of many edge applications such as audio signal processing and voice recognition

- Instead of running all of those DSP functions as software on a general-purpose processor, certain functions can be offloaded to, or accelerated by, an eFPGA with tightly coupled DSP blocks

- The benefit is a platform with more computational capability and flexibility to change implementation based on requirements
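To illustrate why filters map well onto MAC blocks, here is a software reference model of a direct-form FIR filter, in which each output sample costs one multiply-accumulate per tap. This is only an illustrative sketch: the function name and the sample values are hypothetical, and it models the arithmetic, not any particular eFPGA implementation.

```python
def fir_filter(samples, coeffs):
    """Direct-form FIR filter.

    Each output sample is the sum of len(coeffs) multiply-accumulate
    operations, which is the work a hardware MAC block would take over.
    """
    history = [0] * len(coeffs)  # delay line, newest sample first
    outputs = []
    for x in samples:
        history = [x] + history[:-1]  # shift the new sample into the delay line
        acc = 0
        for c, h in zip(coeffs, history):
            acc += c * h  # one MAC per tap
        outputs.append(acc)
    return outputs


# A 4-tap running sum over a short ramp (integer values keep the result exact).
running_sum = fir_filter([4, 8, 12, 16], [1, 1, 1, 1])
print(running_sum)
```

In software the inner loop runs one tap at a time on the processor; in a fabric with several MAC blocks, the taps can in principle be computed in parallel.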

I/O Expansion

- Choosing the right specification and combination of peripheral I/O can be challenging

- With an integrated eFPGA, new and evolving I/O standards can be implemented post-tape-out to extend the life of a mask set

“Real Time” I/O Control

- Some system components need precisely controlled I/O timing to operate correctly

- Implementing this ‘hard real time’ behavior in software can be challenging since processors are a shared resource

- With integrated eFPGA, precise I/O timing can be offloaded from the processor so that CPU loading is decoupled from I/O timing

Hardware Offloaded/Accelerated AI

- AI inferencing workloads that use neural networks tend to benefit from heterogeneous architectures that have parallel processing capability, particularly ones that can process millions of Multiply-Accumulate operations per second

- An eFPGA with tightly coupled DSP blocks and a direct path to an integrated CPU (e.g. ARM or RISC-V) is very efficient at implementing this architecture

- The benefit is a platform with more computational capability (in terms of MACs) and flexibility to change the neural network implementation based on requirements
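To give a rough sense of the "millions of Multiply-Accumulate operations per second" scale, the sketch below counts MACs for a small fully connected network. The layer sizes and inference rate are hypothetical values chosen only for illustration, and bias additions and activation functions are ignored.

```python
def dense_macs(layer_sizes):
    """MACs for one inference through fully connected layers.

    A layer with n_in inputs and n_out outputs needs n_in * n_out MACs.
    """
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


def macs_per_second(layer_sizes, inferences_per_second):
    """Sustained MAC rate needed to run the network at the given rate."""
    return dense_macs(layer_sizes) * inferences_per_second


# Hypothetical network: 64 inputs, two hidden layers, 4 output classes.
layers = [64, 128, 32, 4]
per_inference = dense_macs(layers)       # 8192 + 4096 + 128 MACs
required = macs_per_second(layers, 100)  # at 100 inferences per second
print(per_inference, required)
```

Even this small network needs more than a million MACs per second at 100 inferences per second, which is the kind of load that parallel MAC blocks are suited to absorb.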